AI Overviews and SEO: A CMO's Guide for 2026

- Busylike Team

- 1 day ago

- 12 min read

Your team is probably seeing the pattern already. Rankings hold steady for important terms, content production hasn't slowed, technical SEO is in decent shape, and yet organic traffic either flattens or slips. Pipeline from search gets harder to explain in the weekly dashboard.

That gap is where AI Overviews and SEO became a board-level issue.

Google didn't just add another SERP feature. It changed the job of search. Instead of sending users to a list of pages so they can assemble their own answer, Google increasingly assembles the answer first and offers links second. For CMOs, that means the old question, “How do we rank higher?” is no longer enough. The better question is, “How do we get selected, cited, and remembered inside AI-generated answers?”

Table of Contents

The Search Landscape Is Not What It Was - The real shift is selection, not just ranking

Understanding AI Overviews and Generative Search - From retrieval to selection - Why classic SEO signals are no longer enough

The Business Impact on Clicks, Traffic, and Revenue - What changes in the funnel - SEO vs AEO and GEO

A New Strategic Framework: Answer Engine Optimization - Citable content architecture - Technical authority signals - Cross-platform presence - Performance measurement

Actionable Tactics for AI Search Visibility - What a B2B SaaS team should build - What an e-commerce team should change - What usually fails

Measuring What Matters in the AI Era - Replace ranking-only reporting

The Search Landscape Is Not What It Was

The old SEO playbook assumed a stable exchange. You publish useful content, earn rankings, and search traffic follows. That exchange is weaker now because the SERP itself is doing more of the work.

AI Overviews have expanded fast enough that this isn't a niche behavior shift. Semrush reported that AI Overviews appeared in 25.11% of queries across a 21.9 million keyword dataset by Q1 2026, with especially heavy concentration in informational searches and long-tail questions, which reshapes the top of the funnel where many brands built awareness through search content (Semrush AI SEO statistics).

That matters because many content programs were built precisely around those terms. Educational blog content, glossary pages, how-to articles, comparison pages, and problem-aware thought leadership used to attract early-stage demand. Now, Google often answers the first question itself.

The real shift is selection, not just ranking

Traditional SEO rewarded visibility in a list. Generative search rewards inclusion in a synthesized answer. Those are related, but they aren't the same.

A page can rank and still lose attention if the Overview resolves the user's question before the click. A brand can also gain disproportionate influence if its content gets cited, summarized, or used as a source for the answer. That changes content strategy, reporting, and budget allocation.

Practical rule: Treat rankings as eligibility. Treat citations as the new battleground.

For CMOs, this is less about reacting to a Google feature and more about adapting to a broader discovery pattern. Users are getting comfortable asking full questions, expecting direct answers, and making shortlist decisions before they ever visit a site. Google AI Overviews are the clearest signal that search has entered a generative phase.

Understanding AI Overviews and Generative Search

A buyer searches for a category question, gets an AI-generated summary at the top of the results, scans a few cited sources, and forms an opinion before your site ever enters the session. That is the operating reality behind AI Overviews and SEO in 2026.

AI Overviews change the job of search. Search engines used to send users to pages so they could assemble their own answer. Generative search assembles the answer first, then offers supporting sources. For marketers, that shifts the optimization target from ranking alone to selection and citation.

From retrieval to selection

An AI Overview is a generated response built from multiple sources. It is designed to answer the query on the results page, often with cited links, summaries, follow-up prompts, and extracted claims. The user still has paths to click, but the first moment of influence now happens inside Google's interpretation layer.

That matters because visibility is no longer a simple list position problem. A page can rank well and still contribute little if the model does not use it. A page can also shape the user's understanding before the click if it supplies the definition, comparison, statistic, or framework that gets cited.

This is why the shift is broader than one Google feature. Users are adopting answer-first behavior across search, chat interfaces, assistants, and embedded AI tools. CMOs who want the executive version of that shift should review this perspective on the AI-native CMO playbook.

If you want a plain-English primer on the underlying technology, this generative AI overview on YourAI2Day is a useful companion for non-technical stakeholders.

Why classic SEO signals are no longer enough

Keyword targeting, title tags, internal links, and crawlability still matter. They make a page eligible. They do not guarantee inclusion in a generated answer.

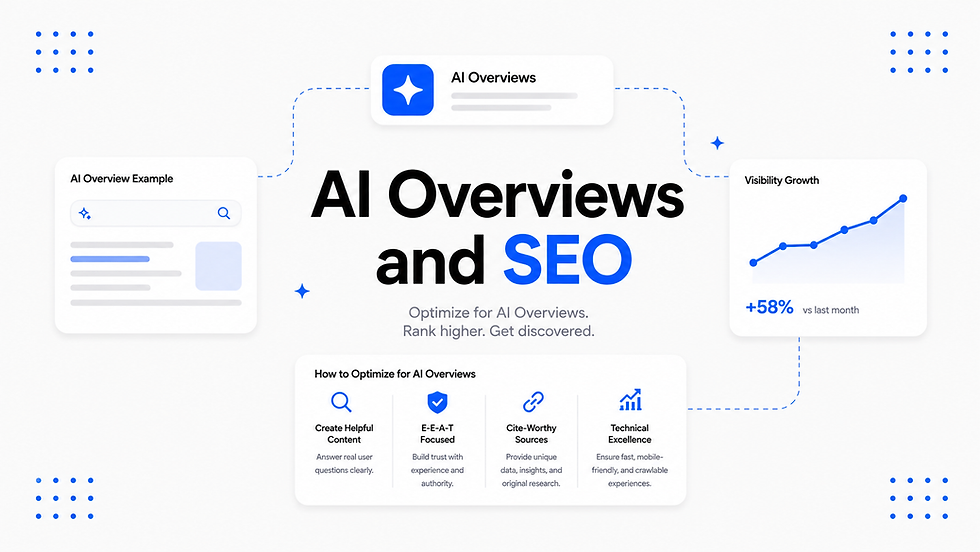

Generative systems reward content that is easy to extract, verify, and reuse. In practice, that means pages need to do four things well:

Answer the question early: Put the core definition, explanation, or recommendation near the top of the page.

Support claims clearly: Use attributable facts, original expertise, and precise language that can be cited without distortion.

Organize information cleanly: Headings, tables, bullet points, and scoped sections help models identify what each passage says.

Cover the decision surface: Strong pages address adjacent questions, trade-offs, exceptions, and alternatives, not just the primary keyword.
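
A rough way to check the first and third points above on an existing page is a structural audit. The sketch below is a heuristic, not a model of how any answer engine scores content: it counts headings, tables, and lists, and measures how long the first paragraph is. The `audit_page` name and the 60-word intro threshold are assumptions made for illustration.

```python
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Counts structural elements and captures the text of the first <p>."""

    def __init__(self):
        super().__init__()
        self.counts = {"h2": 0, "h3": 0, "table": 0, "ul": 0, "ol": 0}
        self.first_paragraph = ""
        self._in_first_p = False
        self._first_p_done = False

    def handle_starttag(self, tag, attrs):
        if tag in self.counts:
            self.counts[tag] += 1
        if tag == "p" and not self._first_p_done:
            self._in_first_p = True

    def handle_endtag(self, tag):
        if tag == "p" and self._in_first_p:
            self._in_first_p = False
            self._first_p_done = True

    def handle_data(self, data):
        if self._in_first_p:
            self.first_paragraph += data


def audit_page(html: str, max_intro_words: int = 60) -> dict:
    """Return simple structure signals; the threshold is an arbitrary assumption."""
    parser = StructureAudit()
    parser.feed(html)
    intro_words = len(parser.first_paragraph.split())
    return {
        "headings": parser.counts["h2"] + parser.counts["h3"],
        "tables": parser.counts["table"],
        "lists": parser.counts["ul"] + parser.counts["ol"],
        "intro_words": intro_words,
        "intro_is_concise": 0 < intro_words <= max_intro_words,
    }
```

A page that reports zero headings, zero lists, and a very long first paragraph is a candidate for restructuring. A clean score does not mean the page will be cited.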

I see teams struggle when they keep briefing content around "terms to rank for" instead of "answers to own." That difference sounds small. It changes the page structure, the editorial standard, and the reporting model.

A ranking mindset asks, "How do we get into the top results?" A GEO and AEO mindset asks, "Why would an answer engine choose our page as source material?"

That is the new bar.

Pages built for the old model often miss that bar. They hide the answer under brand setup, open with vague thought leadership, or spread one idea across 1,500 words without a clear summary section. Those pages may still rank. They are weaker candidates for citation.

If a model cannot identify your answer quickly, trust it, and quote it cleanly, your ranking alone will not protect your visibility.

The Business Impact on Clicks, Traffic, and Revenue

A CMO sees the pattern fast. Rankings hold, impressions stay healthy, and organic traffic still slips. Pipeline from educational content gets harder to attribute. Product page visits from non-branded search soften. Nothing looks broken in the old dashboard, but buyer behavior has changed.

The change is simple to describe and expensive to ignore. Search used to reward visibility with a click. AI Overviews often satisfy part of the query before the visit happens. That shifts SEO from a traffic acquisition channel toward a selection and citation channel. If your brand is not chosen as a source, you lose influence before the buyer reaches your site.

That is why AI Overviews should be treated as the front edge of a broader shift in discovery, not as a single Google feature to monitor. The operating question is no longer just, "How do we rank?" It is, "How do we get selected, cited, and carried into the buyer's decision process across answer engines?"

What changes in the funnel

The biggest loss is not only session volume. It is control over early buyer education.

Prospects now learn category definitions, compare approaches, and narrow options inside the results page. By the time they click, many have already absorbed a machine-mediated view of the market. That creates a different funnel shape. Fewer casual visits at the top. More late-stage visits. Less room to frame the problem on your own terms.

The business effects usually show up in four places:

Informational traffic loses scale: High-ranking educational pages can generate less traffic because the answer layer handles more of the query.

Brand framing moves upstream: The vendors cited in AI responses shape category understanding before a prospect visits any website.

Attribution gets less clean: Search can influence pipeline without producing the same click path teams used to report on.

Qualified visits matter more: The click that does happen often comes from a user who is further along and evaluating options, not just learning basics.

There is a trade-off here. Some broad top-of-funnel traffic will decline. But inclusion in the answer layer can improve the quality of downstream consideration because the user arrives with more context and stronger intent. The risk is obvious. If competitors are cited and you are not, they set the shortlist.

For CMOs working through that shift, Busylike's AI CMO guide is useful because it treats AI visibility as a leadership and measurement problem, not just an SEO task. For ecommerce and product-led teams, this piece on how to get products found by AI is also relevant because product discovery is starting to follow the same pattern.

SEO vs AEO and GEO

The reporting model has to match the new buying journey.

| Dimension | Traditional SEO (The Old Model) | AEO & GEO (The New Model) |
|---|---|---|
| Primary goal | Rank higher in blue links | Get selected and cited in generated answers |
| Main unit of visibility | Position on SERP | Presence inside the answer layer |
| Core success metric | Clicks from search | Citation share, assisted visits, qualified clicks |
| Content approach | Keyword targeting | Question resolution and citation readiness |
| User journey | Search, click, read | Search, summarize, shortlist, then click |
| Competitive frame | Outrank adjacent pages | Become one of the sources the engine trusts |

Ranking still matters. Selection matters more.

That distinction changes budget decisions. A page that holds position but stops driving visits may still create business value if it is repeatedly used in answer generation, supports branded search growth, and improves conversion from later-stage visitors. A page that ranks well but is rarely cited can look healthy in legacy SEO reporting while losing strategic ground where buying decisions now begin.

A New Strategic Framework: Answer Engine Optimization

The practical response is to stop treating AI Overviews as a Google-only anomaly and start operating with a wider AEO and GEO model. Answer Engine Optimization focuses on being selected for direct answers. Generative Engine Optimization expands that mandate across AI-driven discovery environments beyond Google.

This framework is less about chasing one feature and more about building a content and visibility system that machines can reliably interpret.

Citable content architecture

Many content publishers still create pages as if human readers are the only audience. They write long scene-setting intros, hide the answer midway down the page, and mix product messaging with education until neither is clear. That format weakens citation potential.

Citable content architecture starts with answer design. Each page should make the primary answer obvious, then support it with depth. Good pages in this model tend to include short definitions, sectioned explanations, FAQs, examples, and comparison elements that can be lifted cleanly into AI responses.

This is one reason category clusters matter more now. A pillar page gives the broad frame. Supporting pages handle sub-questions with precision. Together, they help the engine understand both topic depth and source consistency.

Technical authority signals

Generative systems still need the same foundation strong SEO has always required. They just use it differently.

Pages that are difficult to crawl, semantically weak, or structurally confusing are less likely to be selected even if the writing is good. Schema, internal linking, semantic headings, tables, bullet lists, and clean indexable architecture all make it easier for systems to retrieve and trust your content. The strategy guidance in Busylike's piece on AI search engine optimization aligns with this reality and is useful for teams updating legacy SEO workflows.

Operating principle: Build pages so a buyer can scan them fast and a model can parse them cleanly.
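
One concrete form of that parseability is FAQ structured data. The sketch below builds a schema.org `FAQPage` object as JSON-LD from question and answer pairs. The `faq_jsonld` helper name is an assumption made for illustration, and whether any given answer engine uses this markup when choosing sources is not something the markup itself guarantees.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical question and answer, for illustration only.
    print(faq_jsonld([
        ("What does workflow automation solve?",
         "It routes approvals and handoffs without manual follow-up."),
    ]))
```

The output is typically embedded in the page inside a `<script type="application/ld+json">` tag, and the answers in the markup should match the visible text on the page.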

Cross-platform presence

This is where many teams still lag. They optimize for Google, then assume that work will automatically transfer to every AI surface. Sometimes it does. Often it doesn't.

A broader GEO strategy matters because AI Overview coverage for e-commerce is low, at 18.5%, which pushes brands to diversify visibility into platforms like Perplexity and ChatGPT where consideration can happen closer to transactional intent (Capptoo on SEO and AI Overviews). If you're in retail, DTC, software evaluation, or any category where buyers compare options conversationally, limiting your strategy to Google leaves exposure on the table.

For product-led teams, this practical resource on how to get products found by AI is worth sharing with both content and merchandising stakeholders.

Performance measurement

The final pillar is operational discipline. Teams need a way to monitor whether they appear in answers, which competitors are cited, how product claims are framed, and what topics generate inclusion versus exclusion.

Specialized tracking becomes necessary. Some brands use manual prompt testing, some rely on SEO platforms plus internal query sets, and some use dedicated monitoring tools. Busylike is one example of a partner that helps brands monitor and shape visibility across LLM environments. The important point isn't the vendor. It's the capability.

Without measurement, AEO and GEO turn into opinion. With measurement, they become an operating system.
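
For teams starting with manual prompt testing, inclusion versus exclusion can be tracked with very little tooling. The sketch below assumes you have already recorded, by hand or with a tool, which brands each answer cited for each prompt. The `citation_gaps` function, the input shape, and the brand names are all hypothetical.

```python
def citation_gaps(results: dict[str, set[str]], brand: str) -> dict[str, list[str]]:
    """Map each prompt where `brand` was not cited to the brands cited instead.

    `results` maps a prompt to the set of brands cited in the answer.
    Prompts whose answers cited no brand at all are skipped.
    """
    gaps = {}
    for prompt, cited in results.items():
        if cited and brand not in cited:
            gaps[prompt] = sorted(cited)
    return gaps


if __name__ == "__main__":
    # Hypothetical prompt-test log, for illustration only.
    log = {
        "best workflow automation for finance": {"Acme", "Globex"},
        "rpa vs workflow automation": {"Globex", "Initech"},
        "what is workflow automation": set(),
    }
    for prompt, competitors in citation_gaps(log, "Acme").items():
        print(prompt, "->", ", ".join(competitors))
```

The prompts that come back are the topics where competitors are setting the shortlist and your brand is absent, which makes them a reasonable starting list for content work.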

Actionable Tactics for AI Search Visibility

Strategy only matters if your team can translate it into production habits. The most effective AI Overviews and SEO programs don't just publish more. They publish in formats that are easier to retrieve, easier to cite, and harder to misinterpret.

A useful benchmark here is that 76.1% of URLs cited in AI Overviews already rank in the top 10, and the pages most favored by LLMs commonly use schema markup, bullet points, and tables, reinforcing that foundational SEO and E-E-A-T still gate entry into AI-generated answers (Position Digital on optimizing for AI Overviews).
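
You can run the same kind of check on your own data. The sketch below assumes you have a list of URLs you have seen cited for your tracked queries and a lookup of each URL's best organic position. Both inputs and the `cited_top10_share` name are assumptions for illustration, and the result describes only your sample, not the benchmark above.

```python
def cited_top10_share(cited_urls: list[str], rankings: dict[str, int]) -> float:
    """Fraction of cited URLs whose known organic position is 10 or better.

    URLs missing from `rankings` are treated as not ranking in the top 10.
    """
    if not cited_urls:
        return 0.0
    in_top10 = sum(
        1 for url in cited_urls
        if rankings.get(url) is not None and rankings[url] <= 10
    )
    return in_top10 / len(cited_urls)
```

A low share in your own sample would suggest that citations in your category are coming from pages outside the top 10, which is worth investigating before you assume ranking alone gates entry.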

What a B2B SaaS team should build

A SaaS company selling workflow software usually has a familiar content mix: product pages, blog posts, comparison pages, and resource hubs. In many cases, the blog is full of broad “what is” content that ranks decently but doesn't get cited because it's vague.

A better approach is to turn core commercial-adjacent questions into answer assets.

For example, instead of one long article on implementation, build a cluster like this:

Decision page: “Workflow automation software for finance teams”

Explainer page: “What finance workflow automation solves”

Comparison page: “RPA vs workflow automation”

FAQ page: “How long implementation usually takes, common blockers, security review considerations”

Proof page: Original documentation on integrations, controls, and process mapping

The writing style matters as much as the topic. Open with a direct answer. Use subheads that mirror actual buyer questions. Include tables where buyers compare options. Add schema where relevant. Keep claims precise.

If your team needs a concrete model for page construction, this guide on structuring content for AI models to effectively cite your brand gives a practical framework.

What an e-commerce team should change

E-commerce teams often make the opposite mistake. They assume product pages are enough. They aren't, especially when buyers ask broad pre-purchase questions in AI interfaces.

A DTC skincare brand, for example, shouldn't rely only on collection and PDP pages. It also needs educational assets that answer category questions with enough clarity to earn citations. Think ingredient explainers, skin concern guides, routine builders, and comparison pages that connect naturally to products without reading like thin affiliate content.

Useful execution patterns include:

Build buying guides: Answer “which product is right for” questions directly.

Add comparison tables: Show differences by use case, not just SKU.

Create glossary content: Define ingredients, materials, or features in plain language.

Support claims carefully: Use consistent language across PDPs, FAQs, and guides so the engine sees one stable narrative.

For enterprise CMS teams working through structured content challenges, Kogifi's Sitecore AI insights offer a practical lens on how content systems affect discoverability.

What usually fails

The failures are consistent enough to spot early.

Keyword-only briefs: If the brief says “target this term” but doesn't define the answer to own, the page usually ends up generic.

Overwritten intros: AI systems don't need a dramatic lead. They need a clean answer.

Thin thought leadership: Broad opinion pieces rarely become citation sources unless they include original frameworks or clearly stated definitions.

Messy page structure: Walls of text are bad for users and worse for retrieval.

Unverified claims: If a page makes sweeping assertions with no clarity around source or evidence, it becomes risky material for answer engines.

Teams that win citations write for retrieval first, persuasion second, and brand style third.

That doesn't mean content becomes robotic. It means the page earns the right to be read by making itself legible to both humans and machines.

Measuring What Matters in the AI Era

A CMO reviews the monthly search report. Rankings are stable. Organic sessions are down. Pipeline looks flat in analytics, but sales keeps hearing, "We saw your brand in the AI answer." That gap is the new measurement problem.

AI search changes the job of SEO reporting. The question is no longer just which keywords you rank for or how many clicks a page earned. The harder, more useful question is whether your brand was selected, cited, and remembered at the moment the engine assembled an answer.

That is the shift from ranking to selection. And it is why GEO and AEO need a different scorecard than classic SEO.

Replace ranking-only reporting

A stronger reporting model tracks visibility at the answer layer and ties it back to demand quality:

Share of answer: How often your brand appears in AI-generated responses for priority prompts, compared with direct competitors.

Citation quality: Whether the engine uses you for definitions, comparisons, recommendations, use cases, or proof points.

Citation framing: The language around the mention. Are you presented as credible, expensive, easy to adopt, enterprise-ready, niche, or high-performance?

Assisted branded demand: Whether branded search volume, direct visits, demo requests, or sales mentions rise after answer visibility improves.

Qualified click yield: Whether fewer visits produce better engagement, stronger conversion rates, or shorter sales cycles because users arrive pre-qualified.
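
Share of answer, the first metric in that list, is simple to compute once responses are logged. The sketch below is one possible definition, not a standard: for a fixed set of priority prompts, it reports the fraction of responses in which each brand was cited. The `share_of_answer` name and the brand names are hypothetical.

```python
from collections import Counter


def share_of_answer(responses: list[set[str]]) -> dict[str, float]:
    """Fraction of logged responses in which each brand was cited.

    Each item in `responses` is the set of brands cited in one AI-generated
    answer. Responses that cite nobody still count toward the total.
    """
    total = len(responses)
    if total == 0:
        return {}
    counts = Counter(brand for cited in responses for brand in cited)
    return {brand: n / total for brand, n in counts.items()}


if __name__ == "__main__":
    # Hypothetical log of four responses, for illustration only.
    log = [{"Acme", "Globex"}, {"Globex"}, {"Acme"}, set()]
    for brand, share in sorted(share_of_answer(log).items()):
        print(f"{brand}: {share:.0%}")
```

Keeping the prompt set fixed from month to month matters more than the exact formula, because the trend is only meaningful if the denominator stays comparable.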

AI Overviews and answer engines compress the path between research and judgment, meaning that by the time someone clicks, the engine may have already shaped the shortlist.

A smaller traffic number can signal better search performance if the visitors arrive with higher intent and clearer context.
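
A quick worked comparison shows how that can happen. The numbers below are invented for illustration and are not drawn from any client data or benchmark; the `conversion_rate` helper is likewise a hypothetical name.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions per session; returns 0.0 when there were no sessions."""
    return conversions / sessions if sessions else 0.0


if __name__ == "__main__":
    # Hypothetical periods: sessions fall by 30%, conversions rise slightly.
    before = {"sessions": 10_000, "conversions": 100}
    after = {"sessions": 7_000, "conversions": 112}
    for label, period in (("before", before), ("after", after)):
        rate = conversion_rate(period["conversions"], period["sessions"])
        print(f"{label}: {period['sessions']} sessions, "
              f"{period['conversions']} conversions, rate {rate:.2%}")
```

In this invented case the session count drops while total conversions and conversion rate both improve, which is the pattern a ranking-and-sessions report would read as a decline.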

The practical trade-off is straightforward. Reporting only on rankings and sessions is easier because the tooling is familiar. Reporting on citation presence, answer influence, and assisted demand is messier, but it reflects how discovery now works. Teams that accept that shift earlier will make better budget decisions.

CMOs do not need to discard traditional SEO metrics. They need to treat them as one layer of the model, not the model itself. In AI-mediated search, the brand that gets quoted, summarized, and recalled has an advantage before the buyer ever reaches the site.

If your team needs a practical plan for AI search visibility, Busylike helps brands audit where they appear in AI-driven discovery, improve citation readiness, and align content, paid media, and AI-native search strategy around measurable business outcomes.
